It sounds like a script from the Netflix futuristic dystopia Black Mirror. Chatbots now ask: “How can I help you?” The reply typed in return: “Are you human?” “Of course I am human,” comes the response. “But how do I know you’re human?” And so it goes on.

Holding the people behind AI accountable

The so-called Turing Test, in which people question a machine’s ability to imitate human intelligence, is happening right now. Powerful artificial intelligence (AI), pumped up on ever more complex algorithms and fed with petabytes of data, as well as billions of pounds in investment, is now “learning” at an exponential rate. AI is also increasingly making decisions about people’s lives.

This raises many burning ethical issues for businesses, society and politicians, as well as regulators. If machine-learning is increasingly deciding who to dole out mortgages to, tipping off the courts on prosecution cases or assessing the performance of staff and who to recruit, how do we know computerised decisions are fair, reasonable and free from bias?

“Accountability is key. The people behind the AI must be accountable,” explains Julian David, chief executive of TechUK. “That is why we need to think very carefully before we give machines a legal identity. Businesses that genuinely want to do good and be trustworthy will need to pay much more attention to ethics.”

The collapse of Cambridge Analytica over data harvesting on social networks and the General Data Protection Regulation, in force from the end of May, are bringing these issues to the fore. They highlight the fact that humans should not serve data or big business, but that data must ultimately be used to serve humans.

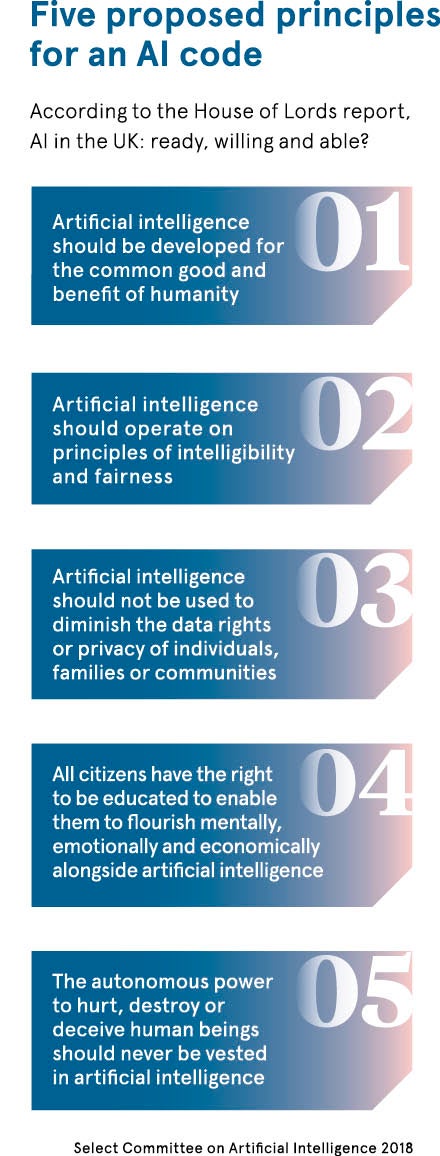

“The general public are starting to kick back on this issue. People say they do not know anything about EU law and information governance, but they do know about data breaches and scandals like the one with Facebook,” says Lord Clement-Jones, chair of the House of Lords Select Committee on Artificial Intelligence.

Former Google engineer Yonatan Zunger has gone further, saying that data science now faces a monumental ethical crisis of the kind other disciplines have faced in the past: chemistry with Alfred Nobel’s invention of dynamite, physics with its reckoning at Hiroshima, medicine with its thalidomide moment and human biology with eugenics.

Ethics must come before technology, not the other way around

But as history tells us, ethics tends to hang on the coat-tails of the latest technology, not lead from the front. “As recent scandals serve to underline, if innovation is to be remotely sustainable in the future, we need to carefully consider the ethical implications of transformative technologies like data science and AI,” says Josh Cowls, research assistant in data ethics at the Alan Turing Institute.

Ethics will need to be dealt with head-on by businesses if they are to thrive in the 21st century. Worldwide spending on cognitive systems is expected to mushroom to about $19 billion this year, a 54 per cent jump on 2017, according to research firm IDC. By 2020, Gartner predicts AI will create 2.3 million new jobs worldwide, while at the same time eliminating 1.8 million roles in the workplace.

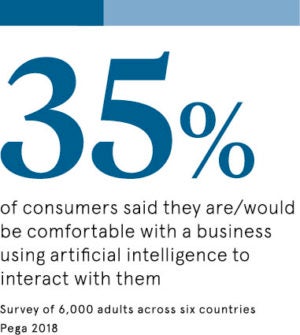

The key concern is that as machines increasingly try to replicate human behaviour and deliver complex professional business judgments, how do we ensure fairness, justice and integrity in decision-making, as well as transparency?

“The simple answer is that until we can clone a human brain, we probably can’t,” explains Giles Cuthbert, managing director at the Chartered Banker Institute. “We have to be absolutely explicit that the AI itself cannot be held accountable for its actions. This becomes more complex, of course, when AI starts to learn, but even then, the ability to learn is programmed.”

Balancing moral rights with economic rights

Industry is hardly an open book; many algorithms are corporations’ best kept secrets as they give private businesses the edge in the marketplace. Yet the opaque so-called “black box AI” has many worried. The AI Now Institute in the United States has called for an end to the use of these unaudited systems, which are beyond the scope of meaningful scrutiny and accountability.

“We also need to look at this from a global perspective. Businesses will need ethical boards going forward. These boards will need to be co-ordinated at the international level by codes of conduct when it comes to principles on AI,” says Professor Birgitte Andersen, chief executive of the Big Innovation Centre.

“Yes, we have individual moral rights, but we shouldn’t neglect the economic rights of society that come from sharing data. Access to health, energy, transport and personal data has helped new businesses and economies grow. Data is the new oil, the new engine of growth. Data will need to flow for AI to work.”

The UK could take the lead on AI ethics

The UK is in a strong position to take the lead in ethics for AI, with calls from prime minister Theresa May for a new Centre for Data Ethics and Innovation. After all, the country is the birthplace of mathematician Alan Turing, who was at the heart of early AI thought, and Google’s DeepMind, the company behind AlphaGo, started here. It was the British who taught Amazon’s Alexa how to speak.

British businesses are also hot on good governance when it comes to many other issues, including diversity and inclusion, and the environment. “The country has some of the best resources anywhere in the world to build on this. Leadership on ethics can be the UK’s unique selling point, but there is a relatively narrow window of opportunity to get this right. The time for action on all of this is now,” TechUK’s Mr David concludes.
