Microsoft projects that by 2020, with ten billion connected devices, data volumes will be fifty times greater than they were just three years ago. The lamentable paucity of skilled technology talent has been well publicised, but with a gargantuan, and growing, amount of data from which to draw business insights, the gulf between supply and demand for data scientists and analysts already seems unbridgeable.


Capgemini research last year found that 64 per cent of organisations view a lack of people with the skills to take advantage of artificial intelligence (AI) as a big barrier to adoption. More recently, the Harvey Nash/KPMG CIO Survey for 2018 went further, revealing that data analytics is where the greatest dearth of tech skills currently lies for global organisations. Indeed, almost half (46 per cent) of the chief information officers quizzed reported a shortage in this area, a figure that has risen over the last four years.

Off-the-shelf AI tools being used to fill the talent gap

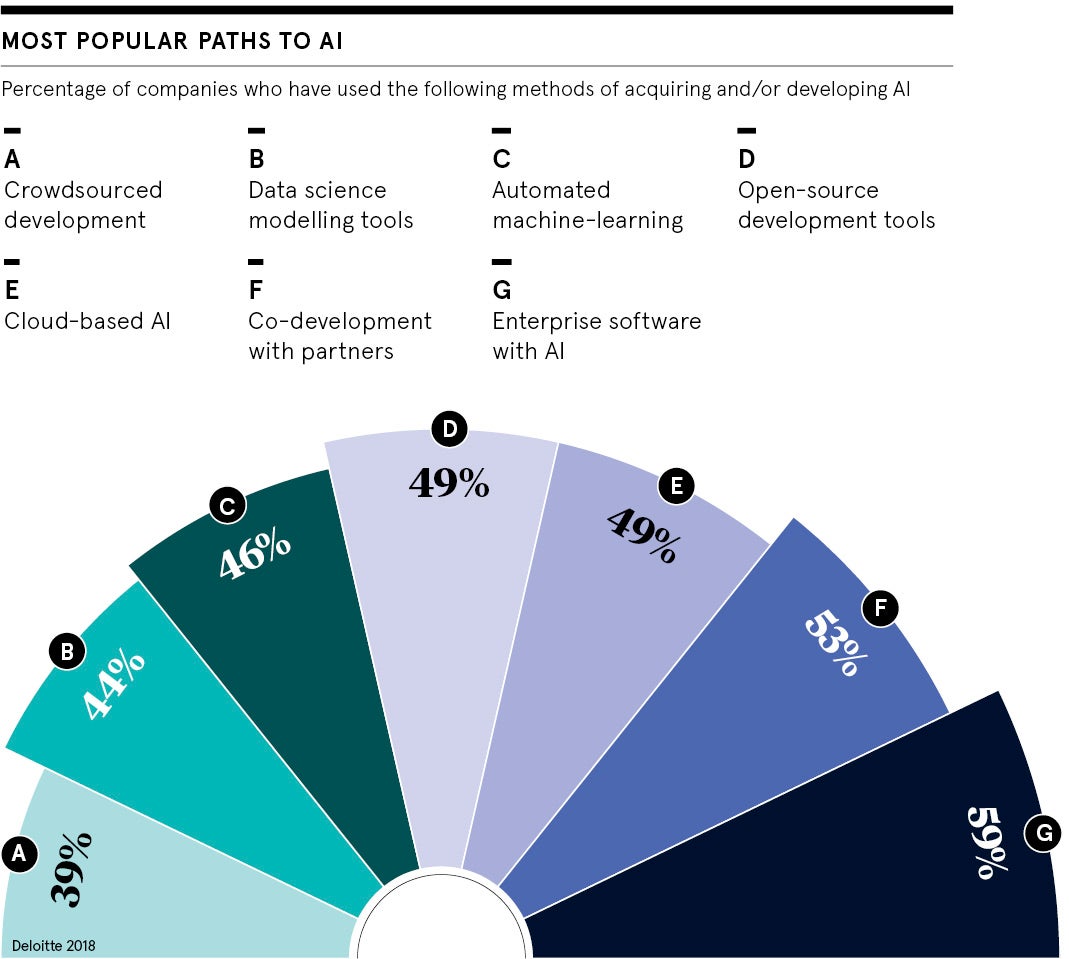

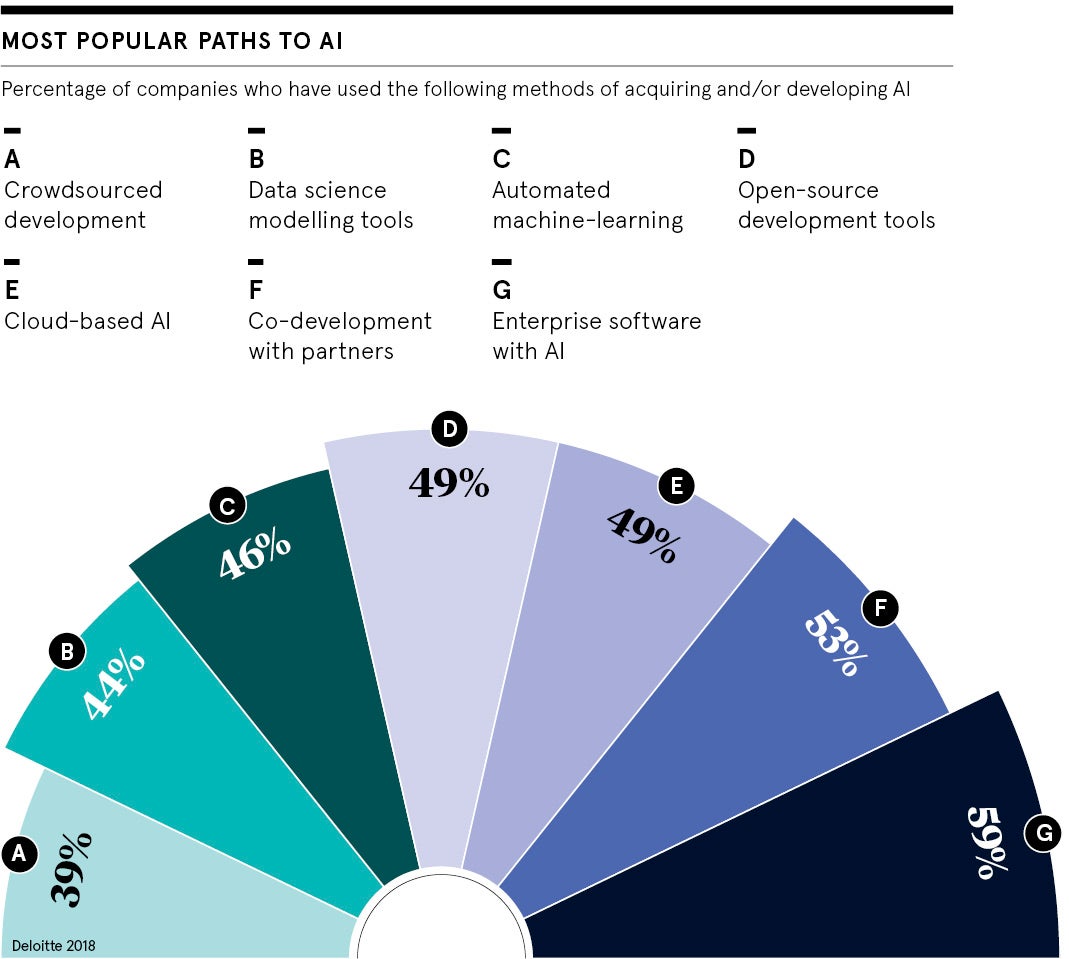

To help bridge this gap and counter the talent challenge, there has been an explosion in the availability of off-the-shelf AI tools that promise to be simple to integrate and straightforward to use. The introduction of pre-built algorithms and open source machine-learning libraries is helping non-experts grapple with organisations’ data and deliver insights through AI.

This democratisation of AI solutions is popular, which is unsurprising given that early adopters of AI in the UK have already seen a 5 per cent improvement in productivity, performance and enterprise outcomes, according to Microsoft’s Maximising the AI Opportunity report, published in October.

The trend is so pronounced that Gartner predicts that in 2019 workers using self-service analytics will generate more value from data analysis than professional data scientists. “Rapid advancements in AI, internet of things and software-as-a-service analytics are making it easier, and more cost effective than ever before, for non-specialists to perform effective analysis and better inform their decision-making,” says Gartner research director Carlie J. Idoine.

How free online training is democratising AI knowledge

Mark Skilton, professor of practice in information systems and management at Warwick Business School, heralds this newfound accessibility for AI solutions. “Nvidia Jetson hardware products and AI on a chip, which come with a sub-£1,500 cost, bring AI automation to the ‘edge’ of networks for most software developers to start building their robotics and deep-learning models from image, text, voice and data analysis,” he says.

Professor Skilton lauds the “astonishing” amount of often free online machine-learning and AI training on offer from Coursera, Udemy, as well as Stanford and Harvard universities, and many others. “These courses have further democratised that knowledge, bringing AI into the mainstream,” he adds.

Similarly, Liz Sebag-Montefiore, director and co-founder of 10Eighty, a London-headquartered career management and employee engagement organisation, welcomes the drive to democratise AI and lower the barrier for entry. “AI is critical to our success given the current talent challenges we face, so an open and collaborative approach to harnessing such powerful technologies will produce a thriving ecosystem for agile and adaptive enterprises,” she says.

Pre-built AI tools won’t help tackle the talent shortage

However, democratising AI carries risks. For one, it won’t address the talent shortage, and the services of the best data scientists will become even more coveted. “Anyone can pick up a hammer, but it requires a skilled tradesperson to use it to build high-quality products,” says Jonathan Clarke, statistical modelling manager for LexisNexis Risk Solutions.

John Abel, Oracle’s vice principal of cloud and innovation, UK and Ireland, agrees. “Businesses should not expect the democratisation of AI technologies to turn employees into effective ‘citizen data scientists’ magically. They need to be coupled with solid training on how to interpret and analyse data properly, as well as robust data governance to make sure the data being used is reliable,” he says.

“This requires trained data scientists to play a critical oversight role in the short term to ensure the proliferation of AI provides businesses with reliable insights in the longer term.”

Kasia Borowska, managing director and co-founder of Brainpool.ai, which boasts a network of more than 300 AI experts for consultancy and strategy, says: “Off-the-shelf AI solutions very rarely work well without customisation. A lot of clients fall into the trap of getting things done quickly and at a lower upfront cost and then spend years fixing the solutions that didn’t end up bringing the desired results.”

Organisations face failure if they can’t understand the tech they use

Additionally, businesses that fail to understand or show the workings of their AI programmes could face dire ethical and financial consequences, especially since the European Union’s General Data Protection Regulation (GDPR) took effect in May 2018.

“While pre-built algorithms and open source machine-learning libraries can be used to create automated, ‘hands-free’ AI solutions, and produce short-term results, democratising AI in this way can be a dangerous game,” warns Iain Brown, head of data science at SAS, UK and Ireland.

“Organisations are forced to rely on the black box without understanding the logic behind its decision-making and they could risk reputational damage when things go wrong.

“GDPR requirements state that organisations must be able to demonstrate how a decision about a particular customer has been reached, so there must be clear data lineage and explainability to meet compliance requirements.”
