In The Hitchhiker’s Guide to the Galaxy, writer Douglas Adams describes a “small, yellow, leech-like creature” called the Babel fish which “feeds on brain-wave energy, absorbing all unconscious frequencies and then excreting telepathically a matrix formed from the conscious frequencies and nerve signals picked up from the speech centres of the brain, the practical upshot of which is that if you stick one in your ear, you can instantly understand anything said to you in any form of language”.

Biologists have not discovered anything like the Babel fish, but thanks to technological advances the science fiction of universal translation is rapidly becoming reality.

Most exciting for Hitchhiker fans is the Pilot earbud, backed by $3.5 million in crowdfunding raised by a startup called Waverly Labs.

The company’s chief executive Andrew Ochoa says: “We were really inspired by wearable technology and began working on the idea of a smart earpiece that could solve a global challenge. We were a small team back then, but we all came from different backgrounds and spoke different languages, and that’s how we came up with the idea.”

Pilot combines several technologies: speech recognition to capture what is said, machine translation (which draws on machine learning), and speech synthesis to voice the result in the new language. It is a hybrid of existing, proprietary technologies, but this will be the first time they have been combined in a wearable device.

“The full conversation system is designed to be used by two people who are both wearing a Pilot earpiece. First we set the languages which we’re speaking using the mobile app and the earpieces simply translate what we’re saying,” says Mr Ochoa.
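Read as software, the system Mr Ochoa describes is a cascade: speech recognition, then machine translation, then speech synthesis, with each wearer's language chosen in advance. The Python sketch below shows only that shape, under loud assumptions. Every name in it (`Earpiece`, `relay`, `PHRASE_TABLE`) is hypothetical, and all three stages are stubs: the "audio" is treated as an already-transcribed string, translation is a toy lookup table, and the output is tagged text rather than sound. It is not Waverly Labs' code.

```python
from dataclasses import dataclass

# Hypothetical phrase table standing in for a real machine translation engine.
PHRASE_TABLE = {
    ("en", "es"): {"hello": "hola", "thank you": "gracias"},
    ("es", "en"): {"hola": "hello", "gracias": "thank you"},
}


@dataclass
class Earpiece:
    wearer: str
    language: str  # chosen in advance, e.g. via a companion app (assumption)


def recognise_speech(audio: str, language: str) -> str:
    # Stub for speech recognition: the "audio" is already a transcript,
    # so the language hint is unused here.
    return audio.strip().lower()


def translate(text: str, source: str, target: str) -> str:
    # Stub for machine translation: look the phrase up, else pass it through.
    return PHRASE_TABLE[(source, target)].get(text, text)


def synthesise(text: str, language: str) -> str:
    # Stub for speech synthesis: tag the text instead of producing audio.
    return f"[{language} voice] {text}"


def relay(speaker: Earpiece, listener: Earpiece, audio: str) -> str:
    """Cascade one utterance from the speaker's language into the listener's."""
    transcript = recognise_speech(audio, speaker.language)
    translated = translate(transcript, speaker.language, listener.language)
    return synthesise(translated, listener.language)


ana = Earpiece("Ana", "es")
ben = Earpiece("Ben", "en")
print(relay(ana, ben, "Gracias"))  # [en voice] thank you
print(relay(ben, ana, "Hello"))    # [es voice] hola
```

The point of the cascade design is that each stage can be swapped out independently; its weakness is that an error in recognition flows straight through into the translation.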

No working Pilot earbud has yet been seen in the wild, but Mr Ochoa says the first version of the app, which provides basic translation, will be released soon, with a fully conversational system and the first commercial devices to follow next spring.

Machine translation

Getting machines to do our translation is not new. Systran, a pioneer, was founded in 1968. One of its earliest customers was the US Air Force, which needed software to translate Russian into English quickly.

The company is behind many of the best-known examples of machine translation (MT).

In the late 1990s, it partnered with AltaVista to provide the first free web-based translation service. In a hat-tip to Douglas Adams, this was known as Babelfish.


Back in 2001, Autodesk, a provider of computer-aided design software, began using Systran’s MT to translate customer support documents; the company said at the time that using a computer cost 50 per cent less than using a human. Human translators are naturally concerned that MT may put them out of a job.

Systran’s chief operating officer François Massemin says for many years there has been a battle between machine and human translators. “It was nasty,” he says. “Human translators saw machines as a threat; they took away some of their jobs and cut their margins down.”

Mr Massemin says we are now seeing the rise of augmented translators. “We are reaching a stage where technology will be there to help the human, but will not be in competition,” he says.

He draws an analogy with commercial pilots. “Someone piloting an Airbus is not controlling every second of the flight, but they are offering a guarantee that you will arrive safely at your destination. Similarly, the translator will be the expert driving the translation technology. The control will still be with the human.”

In trials at Autodesk in 2011, translators’ productivity rose by between 42 per cent and 131 per cent when they used the open-source Moses MT engine.

Until recently, the two main techniques have been statistical machine translation (SMT) and rule-based machine translation (RBMT). SMT is based on the analysis of huge volumes of bilingual texts – think Google Translate. RBMT, on the other hand, applies the grammatical and linguistic rules of each language to translate.
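To make the contrast concrete, here is a toy sketch in Python. The first half is a rule-based translator: a tiny hand-written Spanish-to-English lexicon (invented for illustration) plus a single reordering rule. The second half is IBM Model 1, a classic early statistical model that uses expectation maximisation to learn word-translation probabilities from nothing but paired sentences. Neither is how Systran or Google build their engines; the function names and the three-sentence corpus are assumptions for illustration only.

```python
from collections import defaultdict

# --- Rule-based MT: a hand-written lexicon plus one reordering rule ---
# Toy Spanish -> English lexicon of (translation, part of speech).
LEXICON = {
    "la": ("the", "DET"), "el": ("the", "DET"),
    "casa": ("house", "NOUN"), "coche": ("car", "NOUN"),
    "roja": ("red", "ADJ"), "rojo": ("red", "ADJ"),
}


def rule_based_translate(sentence: str) -> str:
    words = [LEXICON[w] for w in sentence.lower().split()]
    # Rule: Spanish puts adjectives after the noun, English before it.
    i = 0
    while i < len(words) - 1:
        if words[i][1] == "NOUN" and words[i + 1][1] == "ADJ":
            words[i], words[i + 1] = words[i + 1], words[i]
            i += 2
        else:
            i += 1
    return " ".join(word for word, _ in words)


# --- Statistical MT: learn P(target word | source word) with IBM Model 1 ---
def ibm_model1(pairs, iterations=20):
    """pairs: list of (source_tokens, target_tokens). Returns t[(e, f)] ~ P(e | f)."""
    target_vocab = {e for _, es in pairs for e in es}
    t = defaultdict(lambda: 1.0 / len(target_vocab))  # uniform start
    for _ in range(iterations):
        count = defaultdict(float)
        total = defaultdict(float)
        for fs, es in pairs:
            for e in es:
                # E-step: share e's count among the source words it could align to.
                norm = sum(t[(e, f)] for f in fs)
                for f in fs:
                    share = t[(e, f)] / norm
                    count[(e, f)] += share
                    total[f] += share
        # M-step: re-estimate P(e | f) from the expected counts.
        t = defaultdict(float, {(e, f): c / total[f] for (e, f), c in count.items()})
    return t


corpus = [
    ("la casa".split(), "the house".split()),
    ("la flor".split(), "the flower".split()),
    ("una flor".split(), "a flower".split()),
]
t = ibm_model1(corpus)


def best_translation(f: str) -> str:
    candidates = {e: p for (e, src), p in t.items() if src == f}
    return max(candidates, key=candidates.get)


print(rule_based_translate("la casa roja"))  # the red house
print({f: best_translation(f) for f in ["la", "casa", "flor", "una"]})
```

Nobody tells the statistical model that *casa* means "house": it infers this because *la* keeps turning up alongside "the", which leaves "house" as the best explanation for *casa*. Scaled up to millions of sentence pairs, that is the core intuition behind SMT, whereas the rule-based half only ever knows what someone wrote into its lexicon and rules.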

This year has seen the emergence of neural machine translation (NMT), with Systran launching its Purely NMT engine in August. This approach uses artificial neural networks, loosely modelled on the brain, to process entire sentences, paragraphs or documents at once. As in a human brain, sub-networks handle different parts of the job at the same time: one might extract the meaning, another, trained in syntax and semantics, enriches this understanding, while a third analyses context.

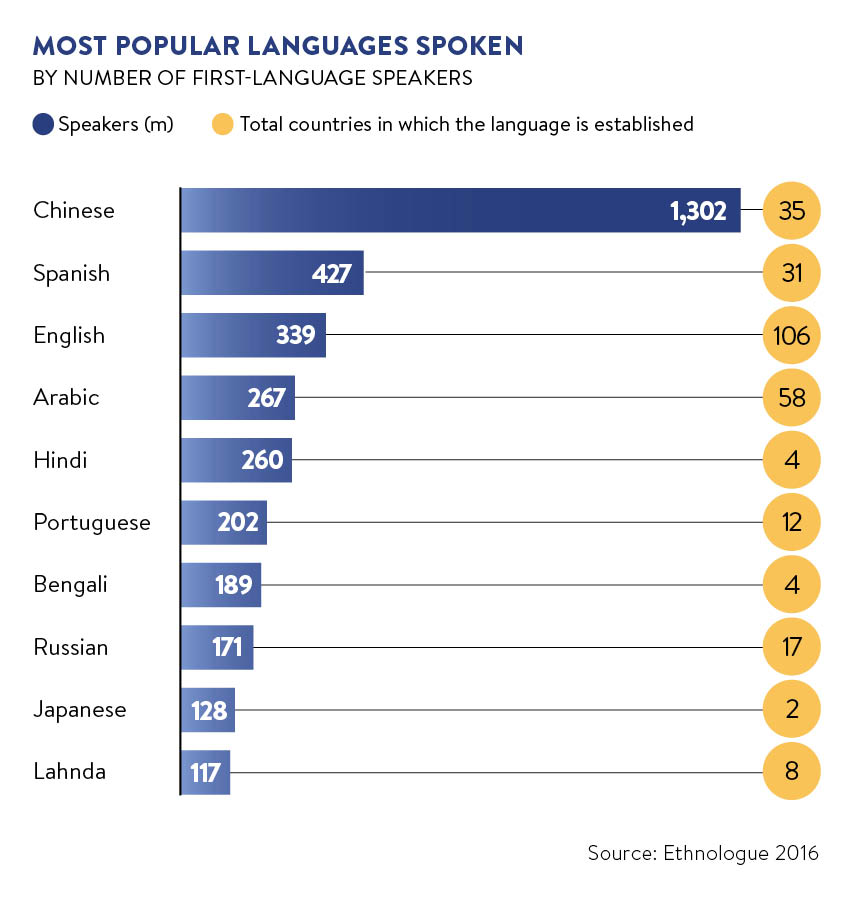

These new developments are challenging the role of English as a lingua franca. Systran’s Mr Massemin points to translations the company is facilitating directly between Chinese and German.


“We used to have a vernacular language, which is English for historic reasons. Nowadays, I don’t think this is true. Chinese and Arabic are more present than they used to be. English is not sufficient to deal with a global organisation in our view,” he says.

Systran recently started working with German tyre manufacturer Continental. Systran’s chief executive Jean Senellart says: “The engineering team speaks German, but they produce the tyres in Poland, China and Brazil. They have to translate their German into technical Polish, Chinese and Portuguese. They use [human] translators, but their privacy and security is not always as high as it could be, and it creates a bottleneck in the time to market.”

Commercial organisations are not the only ones using MT. Translators without Borders (TWB), a non-governmental organisation (NGO) that helps bodies including Doctors without Borders with translation, also uses it. Mirko Plitt, TWB’s head of technology, says the NGO has recently helped develop an app that translates between Kurdish languages and English.

AI challenges

One of the biggest challenges in getting real-time translation to work in devices such as the proposed Pilot earbud is the way people speak. “Sentences are not completed and they are often repeated,” says Mr Senellart.
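One simple way to tackle the repetition half of that problem is to tidy the transcript before it reaches the translation engine. The Python sketch below deletes immediately repeated runs of one to three words; the function name `remove_repetitions` and the three-word window are assumptions for illustration, not how Systran or Waverly Labs handle it. It is deliberately crude: it does nothing for unfinished sentences, and it would also strip deliberate repeats such as "no no".

```python
def remove_repetitions(transcript: str, max_n: int = 3) -> str:
    """Drop immediately repeated runs of 1..max_n words, e.g. 'I I want' -> 'I want'."""
    tokens = transcript.split()
    changed = True
    while changed:  # repeat until a full pass finds no duplicates
        changed = False
        # Try longer runs first so 'can you can you' collapses as one unit.
        for n in range(max_n, 0, -1):
            i = 0
            while i + 2 * n <= len(tokens):
                first = [w.lower() for w in tokens[i:i + n]]
                second = [w.lower() for w in tokens[i + n:i + 2 * n]]
                if first == second:
                    del tokens[i + n:i + 2 * n]  # keep the first copy
                    changed = True
                else:
                    i += 1
    return " ".join(tokens)


print(remove_repetitions("I I want to to go to the station"))  # I want to go to the station
print(remove_repetitions("can you can you help me"))  # can you help me
```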

There is also the problem of the environment in which the technology has to work. An AI assistant in a quiet home will do much better than a smartphone in a train station.

TWB’s Mr Plitt adds: “If you want to sell abroad, language may seem like the biggest hurdle. Yet once you speak the same language, you still have to be able to sell, to understand your customers and their specific needs. You also have to establish a rapport and language is not always the key to that.”

Even Douglas Adams recognised this. In The Hitchhiker’s Guide to the Galaxy, the Babel fish is blamed for causing “more and bloodier wars than anything else in the history of creation”.